Posts Tagged XML

DITA – A framework for scientific publishing?

Posted by peanutbutter in data standards, Journals Publishing on February 1, 2011

There are two industry-recognised standards for XML-based documentation: DocBook and DITA (Darwin Information Typing Architecture).

DocBook is the older of the two specifications and was created specifically for technical documentation. DITA is a younger specification that grew out of IBM; it is described as having its own architecture and was designed to provide structure to more than just a book. Both specifications are OASIS standards.

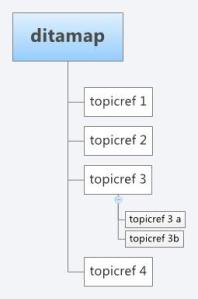

As with XML schemas, both specifications can be extended to include bespoke features. However, DocBook is based more on a book structure, with sections and subsections, whereas DITA is built around topics that can be assembled in any arrangement based on a document map. A DITA topic is itself open to specialisation; however, a topic has only three required elements:

- An id attribute

- A title

- A body


A topic can exist as a single XML file, which can be composed into any arrangement for publication through the use of a document map. A DITA structure would present a more flexible architecture in which the same “topic” (e.g. a journal article section such as an abstract, materials and methods, or results) could be included with ease in more than one publication, correctly referenced. In this respect DITA is more like an object-oriented document schema, and can be more easily repurposed (in terms of structure) for any output format (e.g. PDF, HTML). Equally, DocBook can be configured, with some work, to behave on a more topic-by-topic basis, and DITA can support a book-based methodology. They are, after all, both XML schemas and are equally extensible or open to specialisation.
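A minimal sketch of the topic-and-map idea, using Python's standard `xml.etree.ElementTree`. It follows the three-part topic described above (as far as I know, the DITA DTD itself treats the body as optional). The file names, titles and helper functions (`make_topic`, `make_map`) are assumptions for illustration, and the output is not validated against the DITA DTDs.

```python
import xml.etree.ElementTree as ET


def make_topic(topic_id, title, text):
    """Build a minimal topic: an id attribute, a title and a body."""
    topic = ET.Element("topic", id=topic_id)
    ET.SubElement(topic, "title").text = title
    body = ET.SubElement(topic, "body")
    ET.SubElement(body, "p").text = text
    return topic


def make_map(map_title, topic_files):
    """Build a document map that references topic files in the given order."""
    ditamap = ET.Element("map")
    ET.SubElement(ditamap, "title").text = map_title
    for href in topic_files:
        ET.SubElement(ditamap, "topicref", href=href)
    return ditamap


# One topic, written once (hypothetical content).
methods = make_topic("methods", "Materials and methods",
                     "Placeholder text for the methods section.")

# Two publications reuse the same topic file through their own maps.
article = make_map("Journal article",
                   ["abstract.dita", "methods.dita", "results.dita"])
protocols = make_map("Lab protocol collection", ["methods.dita"])

print(ET.tostring(methods, encoding="unicode"))
print(ET.tostring(article, encoding="unicode"))
print(ET.tostring(protocols, encoding="unicode"))
```

The point of the sketch is that `methods.dita` is referenced, not copied: each map decides where the topic appears, while the topic itself stays in one file.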

As it’s a standard, whole ecosystems have emerged that make use of the DITA architecture. For example, DITA for Publishers provides libraries to convert DITA markup into HTML, PDF, EPUB and Kindle formats. This allows content structured in DITA to be repurposed for different audiences or different devices with relative ease.
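To make the single-source, multiple-output idea concrete, here is a toy sketch in Python. It is not DITA for Publishers or any real DITA toolchain; the two renderers (`to_html`, `to_text`) and the sample topic are assumptions made up for illustration, and they only handle a title and plain paragraphs.

```python
import xml.etree.ElementTree as ET
from html import escape

# A hypothetical topic, kept as the single source of the content.
TOPIC_XML = """<topic id="abstract">
  <title>Abstract</title>
  <body><p>One source, several outputs.</p></body>
</topic>"""


def paragraphs(topic):
    """Collect the text of each paragraph in the topic body."""
    return [p.text or "" for p in topic.iterfind("body/p")]


def to_html(topic):
    """Render the topic as a small HTML fragment."""
    parts = [f"<h1>{escape(topic.findtext('title'))}</h1>"]
    parts += [f"<p>{escape(text)}</p>" for text in paragraphs(topic)]
    return "\n".join(parts)


def to_text(topic):
    """Render the same topic as plain text."""
    return "\n\n".join([topic.findtext("title").upper()] + paragraphs(topic))


topic = ET.fromstring(TOPIC_XML)
print(to_html(topic))
print(to_text(topic))
```

Adding another delivery format means adding another renderer; the topic itself does not change.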

I have recently started using DITA as an architecture to represent content primarily designed for books. However, with new demands appearing for different delivery mechanisms of the traditional textbook, such as Web delivery and ebooks, DITA is proving to be immensely powerful for delivering the same content through different media with relative ease and speed. In using it, it seems obvious that a DITA architecture would benefit the representation of content within a journal article, allowing referencing, re-purposing and multiple-format delivery. Maybe this is a topic for discussion through the Beyond the PDF forum.

In the end, it’s just XML, so I won’t repeat the virtues of content markup through XML. However, for me its main advantage is the object-oriented-like topic structure as a working architecture.


The Triumvirate of Scientific Data

Posted by peanutbutter in bioinformatics, data standards, ontology, open data on October 30, 2008


In a recent Nature editorial entitled Standardizing data, several projects were highlighted that are forfeiting their chances of winning a Nobel prize (according to Quackenbush) and championing the blue-collar science of data standardization in the life sciences.

I wanted to take the article a step further and highlight three significant properties of scientific data that I believe to be fundamental in considering how to curate, standardize or simply represent scientific data, from primary data, to lab books, to publication. These significant properties are the content, syntax and semantics or, more simply put: What do we want to say? How do we say it? What does it all mean? These three properties are what I refer to as the Triumvirate of scientific data.

Content: What do we want to say?

Data content is defined as the items, topics or information “contained in” or represented by a data object: what is, should or must be said. Generic data content standards exist, such as Dublin Core, as well as more focused or domain-specific standards. Most aspects of the research life-cycle have a content standard. When submitting a manuscript to a scientific publisher, for example, you are required to conform to a content standard for that journal. PLoS ONE calls its content standard Criteria for Publication and lists seven points to conform to.

The “Minimum Information about [insert favourite technology]” checklists are efforts by the relevant communities to define content standards for their experiments. These do not (and should not) define how the content is represented in a database or file format; rather, they state what information is required to describe an experiment. Collecting and defining content standards for the life sciences is the premise of the MIBBI project.
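A content standard of this kind can be thought of as a checklist that is independent of storage. The sketch below is a rough illustration only: the required field names are hypothetical and are not taken from any real Minimum Information checklist.

```python
# Hypothetical checklist: what must be said about an experiment,
# with no statement about how it is stored.
REQUIRED_FIELDS = {"organism", "sample_preparation", "instrument", "protocol"}


def missing_fields(record, required=REQUIRED_FIELDS):
    """Return the required items that are absent or empty, in sorted order."""
    missing = []
    for field in sorted(required):
        value = record.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


# The same check applies whether the record came from a database row,
# a spreadsheet or an XML file, as long as it is read into a mapping.
record = {
    "organism": "Homo sapiens",
    "sample_preparation": "2D gel",
    "instrument": "",
    "protocol": "P-001",
}
print(missing_fields(record))
```

The check says nothing about syntax or semantics; it only asks whether the required content is present.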

Syntax: How do we say it?

The content of data is independent of any structure, language implementation or semantics. For example, when viewing a journal article on BioMed Central you typically have the option to view or download the “Full Text”, which is often represented in HTML, or to view the PDF file or the XML. Each representation has the same scientific content to a human, but is structured and then rendered (or “presented”) to the user in three different syntaxes.

The structural or syntactic representation of scientific data is largely database-centric. However, alternative methods can be identified, such as wikis (OpenWetWare, UsefulChem), blogs (LaBLog), XML (GelML) and RDF (UniProt export), or the data can be described as a data model (FuGE) which can be realised in multiple syntaxes.
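As a small illustration of one content in several syntaxes, the sketch below writes the same record as JSON and as XML and reads both back. The record, the element names and the helper functions are assumptions for the example; they are not the formats any publisher actually uses.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical article metadata: the content.
content = {"title": "An example article", "journal": "Example Journal", "year": "2008"}


def to_json(record):
    """One syntax: a JSON object."""
    return json.dumps(record, indent=2)


def to_xml(record):
    """Another syntax: an XML element with one child per item."""
    root = ET.Element("article")
    for key, value in record.items():
        ET.SubElement(root, key).text = value
    return ET.tostring(root, encoding="unicode")


def from_json(text):
    return json.loads(text)


def from_xml(text):
    return {child.tag: child.text for child in ET.fromstring(text)}


print(to_json(content))
print(to_xml(content))

# The serialised strings differ, but the content recovered from each is identical.
assert from_json(to_json(content)) == from_xml(to_xml(content)) == content
```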

Semantics: What do we mean?

The explicit meaning of data is very difficult to get right and is a hard problem in the life sciences. One word can have many meanings, and one meaning can be described by many words. A good example of a failure to correctly determine the semantics of data is described in the paper by Zeeberg et al. (2004). In the paper they describe the misinterpretation of the semantics of gene names: Excel interpreted some gene names as dates and irreversibly converted them to date format, an error which percolated through to the curated LocusLink public repository.

Within the life sciences the issue of semantics is being addressed via the use of controlled vocabularies and ontologies.

According to the Neurocommons definition, a controlled vocabulary is an association between formal names (identifiers) and their definitions. An ontology is a controlled vocabulary augmented with logical constraints that describe the interrelationships between its terms. Not only do we need semantics for data, we need shared semantics, so that we are able to describe data consistently within laboratories, across collaborations and transcending scientific domains. The OBO Foundry is one of the projects tasked with fostering the orthogonal development of ontologies (one term appears in only one ontology and is referenced by others) with the goal of shared semantics.
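The sketch below mimics this kind of failure in Python. It is not Excel's actual algorithm: the `naive_autoformat` function and its pattern are assumptions made up to show how a gene symbol such as DEC1 can be read as a date, and why the conversion cannot be undone. The variant spelling `DEC01` is hypothetical and is there only to show the loss of information.

```python
import re

MONTHS = ("JAN", "FEB", "MAR", "APR", "MAY", "JUN",
          "JUL", "AUG", "SEP", "OCT", "NOV", "DEC")
# A month abbreviation followed by a one- or two-digit number.
DATE_LIKE = re.compile(r"^(%s)-?(\d{1,2})$" % "|".join(MONTHS), re.IGNORECASE)


def naive_autoformat(cell):
    """Guess that text like 'DEC1' is a date and rewrite it as day-month."""
    match = DATE_LIKE.match(cell.strip())
    if not match:
        return cell
    month, day = match.group(1), int(match.group(2))
    if not 1 <= day <= 31:
        return cell
    return f"{day}-{month.capitalize()}"


for symbol in ["DEC1", "DEC01", "TP53"]:
    print(symbol, "->", naive_autoformat(symbol))

# Two different inputs collapse to the same output, so the original
# identifier cannot be recovered from the converted value.
assert naive_autoformat("DEC1") == naive_autoformat("DEC01")
```

The tool applied date semantics to a string whose intended semantics was "gene identifier", and nothing in the syntax alone could tell it otherwise.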
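The distinction can be sketched in a few lines of Python. The identifiers, definitions and the single `is_a` relation below are hypothetical and deliberately simplified; real ontologies use richer logical constraints and dedicated formats.

```python
# Controlled vocabulary: formal names (identifiers) mapped to definitions.
vocabulary = {
    "EX:0001": "cell: the basic structural unit of an organism",
    "EX:0002": "neuron: a cell that transmits nerve impulses",
    "EX:0003": "motor neuron: a neuron that controls muscle activity",
}

# Ontology: the same vocabulary plus relationships between the terms.
# Here there is only one kind of relationship, child is_a parent.
is_a = {"EX:0002": "EX:0001", "EX:0003": "EX:0002"}


def ancestors(term, parents=is_a):
    """Follow is_a links upwards and return every more general term."""
    chain = []
    while term in parents:
        term = parents[term]
        chain.append(term)
    return chain


def is_kind_of(term, candidate):
    """True if `candidate` is a more general term than `term`."""
    return candidate in ancestors(term)


print(ancestors("EX:0003"))
print(is_kind_of("EX:0003", "EX:0001"))
```

With the vocabulary alone, a program can only look terms up; with the relationships it can also infer that anything annotated as a motor neuron is also a cell.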

Summary

When considering how to curate, standardize or represent scientific data, either internally within laboratories, or externally for publication, the three significant properties of content, syntax and semantics should be considered carefully for the specific data. Consistent representation of data conforming to the Triumvirate of scientific data will provide a platform for the dissemination, interpretation, evaluation and advancement of scientific knowledge.

Acknowledgments

Thanks to Phil Lord for helpful discussions on the Triumvirate of data.

Conflict of interest

I am involved in the MIBBI project and the development of GelML, and am a member of the OBO Foundry via the OBI project.
